AI startups are growing fast. Like, really fast. And with that growth comes a problem most founders don’t think about until it’s already causing trouble: infrastructure.

At first, things usually feel manageable. A few models, a handful of workflows, maybe one or two servers doing the heavy lifting. But then the product gains traction. More users come in. More data starts flowing. Your team adds new AI features, more automation, more integrations, and suddenly the setup that worked a few months ago starts feeling painfully small.

You’re running ML models, building automation pipelines, processing massive datasets, and suddenly your servers are screaming. Costs are climbing. Deployments take forever. Performance gets inconsistent right when you need reliability the most. Sound familiar?

That’s exactly where server virtualization starts to make a real difference.

Why AI Startups Can’t Ignore Virtualization Anymore

Here’s the thing about AI workloads: they’re unpredictable. One hour you’re idling, the next you’re spinning up inference jobs that need serious compute. Traditional bare-metal setups just aren’t built for that kind of elasticity.

Server virtualization lets you pool your physical resources and allocate them dynamically. Need more CPU for a training run? Done. Spin up a new VM in minutes. Scale back when you’re done without wasting hardware or over-provisioning “just in case.”

For startups, especially, this matters. You’re not sitting on a data center budget. Every dollar counts. Good virtualization infrastructure for startups means you get enterprise-grade flexibility without the enterprise-grade price tag, which is honestly the dream.

And the AI-specific stuff? GPU passthrough, low-latency networking for real-time inference, container-friendly environments for your ML pipelines, modern virtualization platforms handle all of it now.

Sangfor aSV: A Serious VMware Alternative Worth Knowing

Let’s talk about Sangfor aSV private cloud for a second, because it keeps coming up in conversations about private cloud virtualization for AI teams, and for good reason.

Most folks in IT have grown up with VMware. It works. But the licensing costs? Well, that’s brutal, and the complexity of managing a traditional VMware stack for a lean startup team? Even worse.

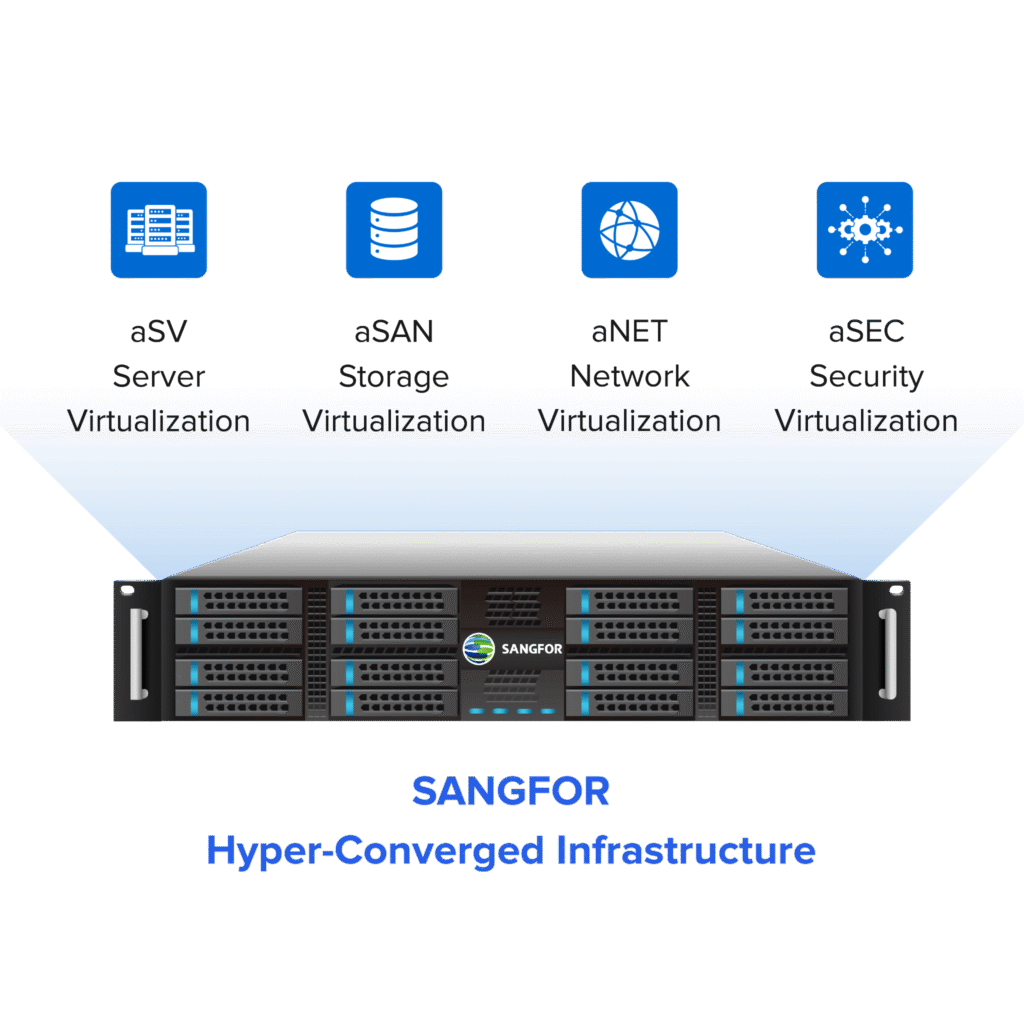

Sangfor aSV takes a different approach. It powers an all-around hyper-converged infrastructure (HCI) platform, meaning compute, storage, and networking are bundled into a single system you can actually manage without a dedicated ops team. One-click deployment. Built-in backup. Native zero-trust security baked in from the start.

What really stands out is the TCO. AI firms adopting Sangfor aSV are reportedly seeing over 50% reduction in total cost of ownership compared to legacy setups. And scaling? Three times faster than traditional approaches, according to early adopters. That’s not marketing fluff; that’s the kind of number that makes a CFO happy.

This shows Sangfor Cloud Infrastructure acts as If you’re actively researching one of the best vmware alternatives., Sangfor aSV deserves a serious look. No vendor lock-in, genuinely easier management, and the ROI story is compelling.

Enter n8n: Workflow Automation Built for AI Pipelines.

Okay, so you’ve got your virtualized infrastructure humming along. Now what? This is where n8n comes in. If you haven’t used it yet, it’s an open-source workflow automation tool, kind of like Zapier but built for technical teams who want full control.

You can wire together data ingestion pipelines, trigger model inference jobs, connect APIs, and handle webhooks, all with a visual editor and real code flexibility underneath.

For AI pipelines specifically, n8n is fantastic. Need to automate a chain where incoming data gets preprocessed, passed to an LLM, then the output routes to different systems based on confidence scores? n8n handles that. It’s container-friendly, scales well with compute bursts, and the community around it is genuinely active.

The natural pairing? Deploy n8n on server virtualization software that can match its workload demands, which brings us back to Sangfor aSV.

Deploying n8n on Sangfor aSV: How It Actually Works.

Here’s a practical walkthrough, minus the fluff. First, set up your Sangfor aSV cluster. The one-click HCI deployment is real. You’re not spending days configuring storage pools manually. Get your nodes joined, networking configured, and you’re ready.

Next, provision your VMs through the aSV console. If your n8n workflows will be triggering GPU-heavy inference jobs, this is where you enable GPU passthrough. Straightforward in the interface, massive performance difference in practice.

Install n8n via Docker. It’s the cleanest approach:

| docker run -it –rm \ –name n8n \ -p 5678:5678 \ -v ~/.n8n:/home/node/.n8n \ n8nio/n8n |

For persistent storage, point your volume mounts to Sangfor aSV’s distributed file system. This is important; you don’t want your workflow data living on ephemeral VM storage.

Then configure auto-scaling policies in aSV. When your n8n nodes start hitting CPU thresholds during peak automation runs, the platform scales resources automatically. Set your thresholds, define your scaling rules, and walk away.

Pro tip: Leverage Sangfor aSV’s bare metal hypervisor capabilities here. KVM-based, near-native performance, none of the overhead you’d get from hosted virtualization options. For node-intensive n8n workflows, this actually matters.

Can You Run n8n on a Bare Metal Hypervisor? Yes, and Here’s Why It’s Better

Yes. Sangfor aSV’s KVM-based architecture sits directly on the hardware. It’s a bare metal hypervisor. No host OS overhead. Your n8n containers get access to raw compute, which translates to faster workflow execution and lower latency on those AI inference chains.

Compare that to running on a hosted cloud VM where you’re essentially virtualization-inside-virtualization. There’s overhead at every layer. For virtualization for AI workloads where milliseconds matter, the bare metal approach wins.

Scaling n8n Workloads: The Real-World Picture

Here’s what actually happens when your AI startup starts growing.

Your n8n workflow volume goes from handling a few hundred executions daily to suddenly needing 10x that. With Sangfor aSV’s live migration and resource pooling, you’re redistributing load across your cluster without downtime. Workflows keep running. Users don’t notice. Your on-call engineer sleeps through the night.

That’s the promise of mature server virtualization, and Sangfor aSV delivers it without requiring a massive ops team to keep it running.

Getting Started

If you’re an AI startup trying to build a real infrastructure foundation without blowing your runway on hardware and licensing, the combination of Sangfor aSV and n8n is worth exploring seriously.

Start with the free trial at Sangfor.com. The demos are genuinely useful, not just sales theater. And if you’re migrating from a legacy setup, aSV’s built-in conversion tools make that less painful than you’d expect.

Scale your AI startup without limits. With the right virtualization layer underneath everything, that’s not just a tagline; it’s actually achievable.